I deleted my blog - damn

Again, a stupid mistake on my side. This is the downside of self-hosting. You will make mistakes. This is one of them.

And again, my site was down for about 30 minutes. Why?

Because I did something stupid again.

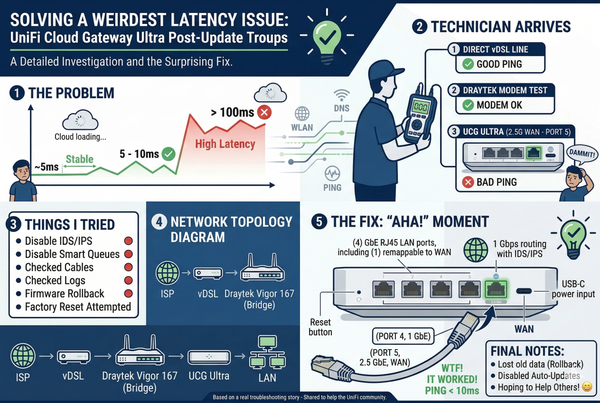

On Tuesday (16.11.2021), around midnight, I wanted to do one last thing after playing around with a Discourse install on my server. I just wanted to clean up the mess I had created with the Docker volumes and containers that were not working properly. So I used CTRL + R to search my shell history for a command and hit ENTER faster than I should have.

I could see a list of all successfully deleted docker volumes. ALL THE VOLUMES! The computer did what I told the computer to do...

Everything was gone. EVERYTHING. My blog, my self-hosted analytics. Okay, that was it. So not too much. But still, it was gone!

Luckily, I am running on Hetzner Cloud and had enabled backups for my server, which I provision with their Terraform provider. This feature creates daily backups and keeps them for 7 days. Plenty for my use case.

So I had to create a server from the last backup, which was about 15 hours old. I only lost one article. Again, I was lucky. I had just published this article and therefore had it in my e-mail inbox anyway. So nothing was really lost there.

The restore took about 20 minutes. After that, I just booted the restored server and copied its /var/lib/docker/volumes/ folder to my live server, which had no volumes anymore. That worked fine for the Ghost blog, and it was up and running again.

For some reason, this did not work for my analytics tool, umami. But it was already 1 am, so I just went to bed. Analytics could be dealt with the next day.

So the next day I had to docker exec into the container on the backup server and dump the Postgres database. After importing the database dump into the live server, everything was working again. I just lost a few hours of analytics data.
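For anyone who ends up in the same spot, here is a rough sketch of that dump and import step. It is not the exact set of commands I typed that night. The container name, database user, and database name are made up, and it assumes the Postgres container accepts local connections without a password, so adjust it to your own setup.

```python
import subprocess


def dump_command(container: str, db_user: str, db_name: str) -> list[str]:
    """Build the docker exec call that runs pg_dump inside the container."""
    return ["docker", "exec", container, "pg_dump", "-U", db_user, db_name]


def restore_command(container: str, db_user: str, db_name: str) -> list[str]:
    """Build the docker exec call that feeds SQL from stdin into psql."""
    # -i keeps stdin open so the dump file can be piped into the container.
    return ["docker", "exec", "-i", container, "psql", "-U", db_user, "-d", db_name]


def dump_to_file(container: str, db_user: str, db_name: str, path: str) -> None:
    """Run on the backup server: write a plain SQL dump to `path`."""
    with open(path, "wb") as out:
        subprocess.run(dump_command(container, db_user, db_name), stdout=out, check=True)


def restore_from_file(container: str, db_user: str, db_name: str, path: str) -> None:
    """Run on the live server: load the SQL dump from `path` into the database."""
    with open(path, "rb") as dump:
        subprocess.run(restore_command(container, db_user, db_name), stdin=dump, check=True)


# Hypothetical usage ("umami-db" and "umami" are placeholder names):
#   on the backup server:  dump_to_file("umami-db", "umami", "umami", "umami.sql")
#   copy umami.sql to the live server, then:
#   on the live server:    restore_from_file("umami-db", "umami", "umami", "umami.sql")
```

A plain SQL dump is handy here because it can be read and checked before it gets imported. It probably needs an empty target database on the live side, otherwise psql may complain about tables that already exist.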

This is actually not a big deal, but I really like to check how many people are reading my blog. It feels nice to see that at least some people like to read my shenanigans.

What did I learn from this? I need to have a better backup mechanism. I am currently thinking about a simple script that will do the following:

- dump ghost blog database

- copy ghost content folders

- dump umami database

- dump plausible database

- tar that up

- move that to some S3

With that, I could control how often I want to have the backup. S3 could do the versioning and housekeeping like deleting older backups automatically. So no custom logic is needed. Need to think and play with this a little. When I have something ready I will share it here.
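A first rough sketch of how the steps above could look in Python. Nothing here is final or tested against my real setup. It assumes the AWS CLI is installed and configured for the upload, and every container name, path, and bucket name in the example call is hypothetical. Which dump commands to use depends on the database each service runs on.

```python
import subprocess
import tarfile
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def run_dump(command: list[str], target: Path) -> None:
    """Run a dump command and write whatever it prints to stdout into `target`."""
    with target.open("wb") as out:
        subprocess.run(command, stdout=out, check=True)


def make_archive(staging: Path, content_dirs: list[Path], archive: Path) -> Path:
    """Pack the staged dumps and the content folders into one .tar.gz file."""
    with tarfile.open(archive, "w:gz") as tar:
        tar.add(staging, arcname="dumps")
        for folder in content_dirs:
            tar.add(folder, arcname=f"content/{folder.name}")
    return archive


def upload_command(archive: Path, bucket: str) -> list[str]:
    """Build the AWS CLI call that copies the archive into the bucket."""
    return ["aws", "s3", "cp", str(archive), f"s3://{bucket}/{archive.name}"]


def backup(dumps: dict[str, list[str]], content_dirs: list[Path],
           bucket: str, workdir: Path) -> Path:
    """Dump all databases, tar them up with the content folders, and upload."""
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    with tempfile.TemporaryDirectory() as tmp:
        staging = Path(tmp)
        for filename, command in dumps.items():
            run_dump(command, staging / filename)
        archive = make_archive(staging, content_dirs, workdir / f"backup-{stamp}.tar.gz")
    subprocess.run(upload_command(archive, bucket), check=True)
    return archive


# Example call (container names, users, paths, and the bucket are hypothetical):
# backup(
#     dumps={"umami.sql": ["docker", "exec", "umami-db", "pg_dump", "-U", "umami", "umami"]},
#     content_dirs=[Path("/var/lib/docker/volumes/ghost_content/_data")],
#     bucket="my-backup-bucket",
#     workdir=Path("/var/backups"),
# )
```

The archive name carries a timestamp, so every run creates a new object and a lifecycle rule on the bucket could expire the old ones. Uploading to a fixed name and letting bucket versioning keep the history would work just as well. Run it from cron as often as you like.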

So, what do we learn?

Backups. Just do backups. Everybody will make mistakes like these because we are all human. Enabling the backup is just a click or, in my case, a boolean in the Terraform manifest. Do it and sleep well!

If you want to run your own servers as well, and would like to have them in Germany at a good price, I can recommend Hetzner Cloud*.

They also have a good Terraform integration, which you should definitely try. You can spin a testing lab for learning up and down for a few cents.